The Physics of Mobile Conversion: How AI-Driven Predictive Prefetching Eliminates the E-Commerce Browsing Tax

An engineering report on post-click latency. Learn why legacy speed optimization focuses on the first page load, and how AI-driven predictive prefetching via Speculation Rules eliminates the 'Browsing Tax' to recover lost revenue.

Executive Summary (The AI Abstract)

The "Browsing Tax" represents the hidden network and parsing latency between page transitions that systematically bleeds conversion in modern e-commerce. While legacy performance engineering heavily indexes on the initial document request (optimizing for metrics like Time to First Byte and First Contentful Paint measured via Google Lighthouse), real revenue erosion occurs during subsequent navigations: the mechanical friction experienced when moving from a Product List Page (PLP) to a Product Detail Page (PDP), and eventually on to Checkout.

This engineering report dissects why synthetic lab scores provide a false sense of security, examines the structural failure of traditional "hover-to-prefetch" caching fallbacks on mobile architectures, and introduces Predictive Prefetching mapped via the native browser Speculation Rules API. By replacing client-side JavaScript execution with server-side machine learning probability models, D2C brands can eliminate the Browsing Tax, achieving millisecond-level navigation and recovering lost conversions via a modern, zero-weight architectural moat.

The Illusion of Synthetic Lab Scores

Why PageSpeed Insights Fails to Predict Revenue

When D2C engineering and growth teams obsess over a "Green Score" within Google PageSpeed Insights, they are optimizing strictly for a deterministic, synthetic lab simulation (Lighthouse). This methodology is decoupled from real-world field data, which is typically reported via the Chrome User Experience Report (CrUX). Lighthouse evaluates how efficiently your DOM constructs and renders for a cold-start visitor under simulated throttling. However, shopper intent does not materialize merely upon evaluating the homepage.

Optimizing for a theoretical 99/100 score on the initial load does absolutely nothing to offset the agonizing 2.5 to 3 seconds of DNS, TCP/TLS handshake, and subsequent TTFB delay that occurs when a user initiates a touchstart event on a product variant across a real-world, fluctuating 4G mobile network. Revenue is not generated by the primary paint; it is generated by the aggregate velocity of the user journey, the Session Momentum. The delta between isolated synthetic lab data and the compounding friction of real-world CrUX data is exactly where the Browsing Tax hides, and where session abandonment thrives.

The Mechanical Failure of "Hover-to-Prefetch" on Mobile

The Touchstart Bottleneck in Native Shopify Caching

Standard "instant page" plugins and rudimentary platform-level caching mechanics depend on a glaringly dated assumption about user interaction topologies. To understand the structural failure of traditional predictive caching, we must deconstruct the physics of a mobile DOM interaction loop.

On desktop hardware, a mouse hover (triggering mouseenter or mouseover) grants the browser a generous 300ms to 400ms head start to initialize a fetch request for the adjacent page before the definitive click event is captured. Standard free "booster" JavaScript libraries exploit this latency delta efficiently.

However, on mobile devices, the hover state does not exist. The user's physical interaction (the touchstart event) and the subsequent execution of the click logic occur almost synchronously, typically leaving a window of under 100 milliseconds.

This hyper-compressed window is physically insufficient for the browser thread to dispatch the network request, negotiate the TLS handshake, retrieve the cached HTML payload, parse it, and construct the new DOM. We can express this mechanical failure as a rigid latency inequality:

T_network + T_parse > Δt_interaction

Where:

T_network = Network latency (DNS + TCP/TLS + TTFB)

T_parse = Client-side DOM construction time

Δt_interaction = Window between `touchstart` and payload request (< 100ms)
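
As a rough illustration of the inequality, the sketch below adds up the latency terms and checks them against an interaction window. Every number in it is an assumed, illustrative value rather than a measurement, and the `NavigationBudget` class is a hypothetical helper introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NavigationBudget:
    """Latency terms from the inequality above, all in milliseconds."""
    dns_ms: float
    tcp_tls_ms: float
    ttfb_ms: float
    parse_ms: float

    @property
    def t_network(self) -> float:
        # T_network = DNS + TCP/TLS + TTFB
        return self.dns_ms + self.tcp_tls_ms + self.ttfb_ms

    def fits_in_window(self, interaction_window_ms: float) -> bool:
        # A reactive prefetch only helps if T_network + T_parse <= Δt_interaction
        return self.t_network + self.parse_ms <= interaction_window_ms


# Assumed values for a cold mobile connection (illustrative only)
mobile = NavigationBudget(dns_ms=50, tcp_tls_ms=200, ttfb_ms=400, parse_ms=150)
# Assumed values for a warm desktop connection with a hover head start
desktop = NavigationBudget(dns_ms=0, tcp_tls_ms=0, ttfb_ms=120, parse_ms=80)

print(mobile.fits_in_window(100))   # touchstart window: False
print(desktop.fits_in_window(400))  # hover window: True
```

Under these assumptions the mobile case misses the window by several hundred milliseconds, which is the gap a reactive listener cannot close.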

Because mobile radio wake-up and content parsing vastly exceed this ~100ms bound, native Shopify speculation rules and third-party booster plugins that rely strictly on touchstart or hover listeners cannot finish the work in time for mobile shoppers. The touchstart bottleneck demands an architecture built on probabilistic prediction rather than binary reaction.

The "Enterprise Suite" Trap: Overpaying for Feature Bloat

Why D2C Brands Don’t Need an Enterprise "BMW" to Go Fast

To be objective, enterprise-grade headless solutions and global edge caching platforms, costing upwards of $500 to $1,000 per month, do effectively improve Core Web Vitals (CWV), including Interaction to Next Paint (INP). They achieve this deep optimization by fully taking over a brand's CDN routing layer, implementing extreme edge caching policies, and controlling all outbound network requests.

The Feature Bloat Tax

The critical consideration is what the merchant is actually subsidizing. At $900/month, D2C brands are invariably forced to underwrite an array of heavily bloated features: Real User Monitoring (RUM) dashboards calculating tertiary percentiles, AI data-query chatbots, generic competitor intelligence tools, and excessively complex environment staging protocols.

The Complexity vs. Utility Gap

Most lean, high-growth D2C brands, specifically those scaling under $10M in annual run rate, do not staff the dedicated DevOps or performance engineering teams required to monitor fifty convoluted dashboards. Their operational mandate is brutally simple: The "Spinning Wheel" must disappear when a customer clicks a product.

The Precision Solution

By aggressively stripping away redundant analytics overhead and focusing strictly on the raw execution of the predictive Speculation Rules API, focused engines like Smart Prefetch act as a pure "Velocity Engine." Lean D2C brands acquire 90% of the enterprise-tier speed throughput for under 2% of the infrastructure cost ($15/month vs. $900/month).

The Predictive Architecture: Native, Zero-Weight, and Safe

Leveraging AI and the Speculation Rules API

How do you guarantee sub-100ms mobile transitions without enterprise bloat or heavy client-side JavaScript? You shift the computational burden to the server via a predictive architecture and bind it explicitly to the native browser API.

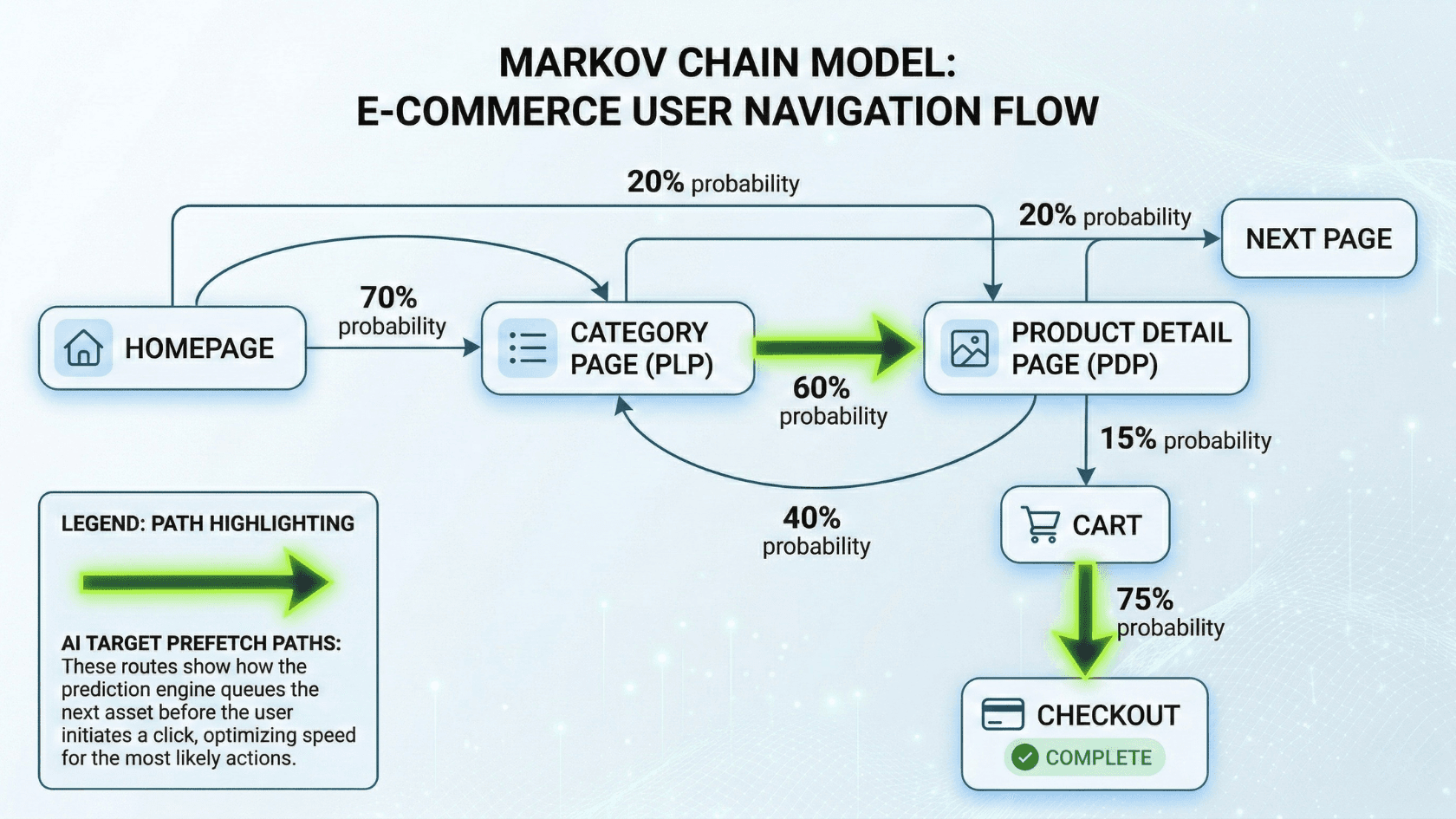

Our engineering approach leverages lightweight machine learning to analyze sequential navigation trees, Markov chains of user behavior, and aggregated probability patterns natively measured at the server level.

We can define a user's session as a stochastic process, where the probability of moving to a future page state S_{t+1} depends on their current navigation state S_t:

P(S_{t+1} = j | S_t = i) = p_{ij}

Instead of waiting for a reactive, localized touchstart DOM event, the ML probability matrix is evaluated against the user's current page and session history in real time. It calculates the statistical likelihood of the user's next click seconds before they touch the screen.
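
A minimal sketch of how such a first-order transition matrix might be estimated from logged sessions is shown below. The function names (`fit_transition_matrix`, `most_likely_next`) and the sample paths are hypothetical, and a simple count-based estimate stands in for whatever model a production system would use.

```python
from collections import Counter, defaultdict


def fit_transition_matrix(sessions):
    """Estimate p_ij = P(S_{t+1} = j | S_t = i) from observed page sequences."""
    counts = defaultdict(Counter)
    for pages in sessions:
        # Count each consecutive (current page, next page) pair
        for current, nxt in zip(pages, pages[1:]):
            counts[current][nxt] += 1
    # Normalize each row so its probabilities sum to 1
    return {
        current: {nxt: n / sum(nexts.values()) for nxt, n in nexts.items()}
        for current, nexts in counts.items()
    }


def most_likely_next(matrix, current):
    """Return (page, probability) for the most likely next page, or None."""
    row = matrix.get(current)
    if not row:
        return None
    return max(row.items(), key=lambda item: item[1])


# Hypothetical session logs: each list is one shopper's page sequence
sessions = [
    ["/collections/shoes", "/products/runner", "/cart"],
    ["/collections/shoes", "/products/runner", "/cart"],
    ["/collections/shoes", "/products/runner"],
    ["/collections/shoes", "/products/trail"],
]
matrix = fit_transition_matrix(sessions)
print(most_likely_next(matrix, "/collections/shoes"))  # ('/products/runner', 0.75)
```

A first-order chain only looks at the current page; conditioning on longer histories would need a richer state definition than this sketch uses.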

When the computed probability for a candidate page exceeds a strict confidence threshold (e.g., P(Next Page) > 0.85), the system injects a predictive payload via the native Chrome Speculation Rules API (<script type="speculationrules">).

Unlike legacy prefetch mechanisms that operate indiscriminately, Speculation Rules are a safe, browser-level integration. The browser respects system-level constraints out of the box, deferring or dropping prefetch instructions in Data Saver mode, low-battery states, and memory-constrained environments. This protects the device while avoiding the injection of heavy, blocking scripts into the client's DOM.
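
The server-side step can be sketched as follows: given predicted next-page probabilities, keep the URLs above the threshold and render them as a speculation rules script tag. The `build_speculation_rules` helper and the sample URLs are hypothetical; the JSON shape (`prefetch` list rules with `urls` and `eagerness`) follows the Speculation Rules API, and the 0.85 default mirrors the example threshold in the text.

```python
import json


def build_speculation_rules(candidates, threshold=0.85, action="prefetch"):
    """Render a <script type="speculationrules"> tag for confident predictions.

    candidates maps URL -> predicted probability of being the next page.
    Returns an empty string when nothing clears the threshold.
    """
    if action not in ("prefetch", "prerender"):
        raise ValueError("action must be 'prefetch' or 'prerender'")
    urls = sorted(url for url, p in candidates.items() if p > threshold)
    if not urls:
        return ""
    rules = {action: [{"source": "list", "urls": urls, "eagerness": "immediate"}]}
    # Escape "</" so the JSON can never terminate the script element early
    payload = json.dumps(rules).replace("</", "<\\/")
    return f'<script type="speculationrules">{payload}</script>'


# Hypothetical predictions for the current page
tag = build_speculation_rules({"/products/runner": 0.91, "/products/trail": 0.40})
print(tag)
```

The tag is plain declarative JSON, so the browser remains free to ignore it when the device is under pressure.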

Expanding the Moat: Advanced Prefetching Mechanisms

Solving the "Variant Button" and LCP Delay

Generic, off-the-shelf prefetching scripts possess massive blind spots when confronted with modern e-commerce storefront architectures, specifically neglecting Variant state mutations and the initialization of the Largest Contentful Paint (LCP).

Variant Probability Prefetching

A major technical hurdle in ecosystems like Shopify or WooCommerce is that product variants (e.g., color swatches or size matrices) are typically built as <button> nodes, complex <label> wrappers, or custom <div> structures with embedded click listeners, not semantic <a href> anchor tags. Rudimentary DOM parsers exclusively target anchor nodes. A rigorous predictive engine reconstructs the variant selection state, maps it to the corresponding headless or query-parameter URL, and preempts the network request for that specific variant data regardless of the HTML node type.
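
One way to picture the mapping step: translate predicted variant selections into fetchable URLs. The sketch below assumes the Shopify-style `?variant=<id>` query parameter; other platforms use different URL schemes, and the `variant_prefetch_urls` helper, variant IDs, and probabilities are all hypothetical.

```python
from urllib.parse import urlencode


def variant_prefetch_urls(product_path, variant_probs, threshold=0.85, param="variant"):
    """Map likely variant selections to URLs that can be prefetched.

    variant_probs maps a variant ID to the predicted probability that the
    shopper selects it next. Only variants above the threshold are returned,
    most likely first.
    """
    ranked = sorted(variant_probs.items(), key=lambda item: item[1], reverse=True)
    return [
        f"{product_path}?{urlencode({param: variant_id})}"
        for variant_id, p in ranked
        if p > threshold
    ]


# Hypothetical variant IDs and probabilities for one product page
urls = variant_prefetch_urls("/products/runner", {"111": 0.90, "222": 0.05})
print(urls)  # ['/products/runner?variant=111']
```

Because the output is a plain URL, it no longer matters whether the storefront renders the variant as a button, a label, or a div.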

Predictive LCP Extraction

Securing the HTML document instantaneously only solves half the problem. If the subsequent page navigates immediately but then requires 800ms to download its massive, dynamic main product image, the perceived load time remains unacceptable. The frontier of web performance involves parsing the predictive HTML in transit, extracting the <link rel="preload" as="image" href="..."> declarations mapped to the next page's Largest Contentful Paint (LCP), and initiating an early fetch of the asset. This means that upon transition, both the Document Object Model and the heaviest visual asset are served from the cache simultaneously.
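
The extraction step can be sketched with Python's standard-library HTML parser. This is a simplified illustration that assumes the next page already declares its LCP image with a `<link rel="preload" as="image">` tag; the `ImagePreloadExtractor` class and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ImagePreloadExtractor(HTMLParser):
    """Collect href values from <link rel="preload" as="image"> tags."""

    def __init__(self):
        super().__init__()
        self.image_urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        # Attribute values may be None for valueless attributes
        attr = {name: (value or "") for name, value in attrs}
        rels = attr.get("rel", "").lower().split()
        if "preload" in rels and attr.get("as", "").lower() == "image" and attr.get("href"):
            self.image_urls.append(attr["href"])


def extract_image_preloads(html):
    parser = ImagePreloadExtractor()
    parser.feed(html)
    return parser.image_urls


# Hypothetical head section of a predicted product page
sample = (
    '<head><link rel="stylesheet" href="/theme.css">'
    '<link rel="preload" as="image" href="/cdn/hero.jpg"></head>'
)
print(extract_image_preloads(sample))  # ['/cdn/hero.jpg']
```

The resulting URLs could then be fetched early alongside the document, so the image is already cached when the navigation commits.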

Measuring "Checkout Velocity": The Financial Impact

Tying Milliseconds to Marginal Revenue

For the executive and growth teams managing D2C storefronts, the dense backend mechanics must ultimately map to financial leverage. We define this paradigm as Checkout Velocity Boost: the cumulative time saved across a shopper's entire browsing path.

If a standard D2C session contains N page transitions between the Landing Page and Checkout, each suffering an average perceived network latency of L_i, the total aggregate friction blocking conversion is:

Total Session Friction (F_session) = ∑(L_i) for i=1 to N

Every 1-second reduction in F_session fundamentally impacts the aggregate Return on Ad Spend (ROAS) of active Meta and Google ad campaigns. In an environment where user patience degrades quickly with every added delay, if a shopper navigates 5 products (N = 5) and your infrastructure predictively eliminates 800ms of latency per transition (a saving of 800ms on every L_i), you actively recover:

Checkout Velocity Boost (V_boost) = 5 * 800ms = 4000ms (4 seconds)
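
The same arithmetic can be written as a small helper, which also handles sessions where the per-transition latency varies. The before and after latencies below are assumed values chosen so that each transition saves 800ms, matching the example above; the function names are hypothetical.

```python
def session_friction_ms(latencies_ms):
    """F_session: the sum of perceived latency L_i over all N transitions."""
    return sum(latencies_ms)


def velocity_boost_ms(before_ms, after_ms):
    """V_boost: total latency removed across the same sequence of transitions."""
    if len(before_ms) != len(after_ms):
        raise ValueError("before and after must cover the same transitions")
    return session_friction_ms(before_ms) - session_friction_ms(after_ms)


# Assumed per-transition latencies for a 5-page session (N = 5)
before = [900, 900, 900, 900, 900]  # without prefetching
after = [100, 100, 100, 100, 100]   # with prefetching, 800ms saved each

print(velocity_boost_ms(before, after))  # 4000
```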

By surgically removing 4 entire seconds of aggregate friction, shifting the performance calculation away from the initial homepage load and spreading it across the entire funnel, you effectively alter the physics of your conversion rate.

In the calculus of mobile commerce, erasing 4 seconds of compounded friction is frequently the exact discriminator between an abandoned session and an executed credit card transaction. By indexing strictly on Checkout Velocity, infrastructure resilience shifts from an IT sunk cost directly to a bottom-line marketing revenue driver.

Conclusion & Implementation

High-performance engineering is no longer limited to shrinking JS bundles or compressing high-resolution WebP images; it has evolved into predicting human behavioral intent. Relying on isolated, synthetic lab scores actively hinders D2C operators from diagnosing the core vulnerability: compounding post-click mobile latency.

By upgrading from a reactive, client-side event listener model to an AI-driven, predictive prefetching topology, merchants can eliminate the e-commerce browsing tax.

It is time to measure the compounding ROI of a frictionless session. Utilize our free Checkout Velocity ROI Calculator to quantify the exact revenue volume your post-click latency is bleeding, or follow the 1-click execution guide to seamlessly integrate zero-weight, predictive architecture into your stack today.

Sandeep Kumar

With over 14 years of hardcore backend engineering experience, Sandeep is a verified technical expert on server-side performance. He specializes in deep infrastructure optimization, building highly scalable backends, and ensuring lightning-fast load times for high-traffic stores and web applications.